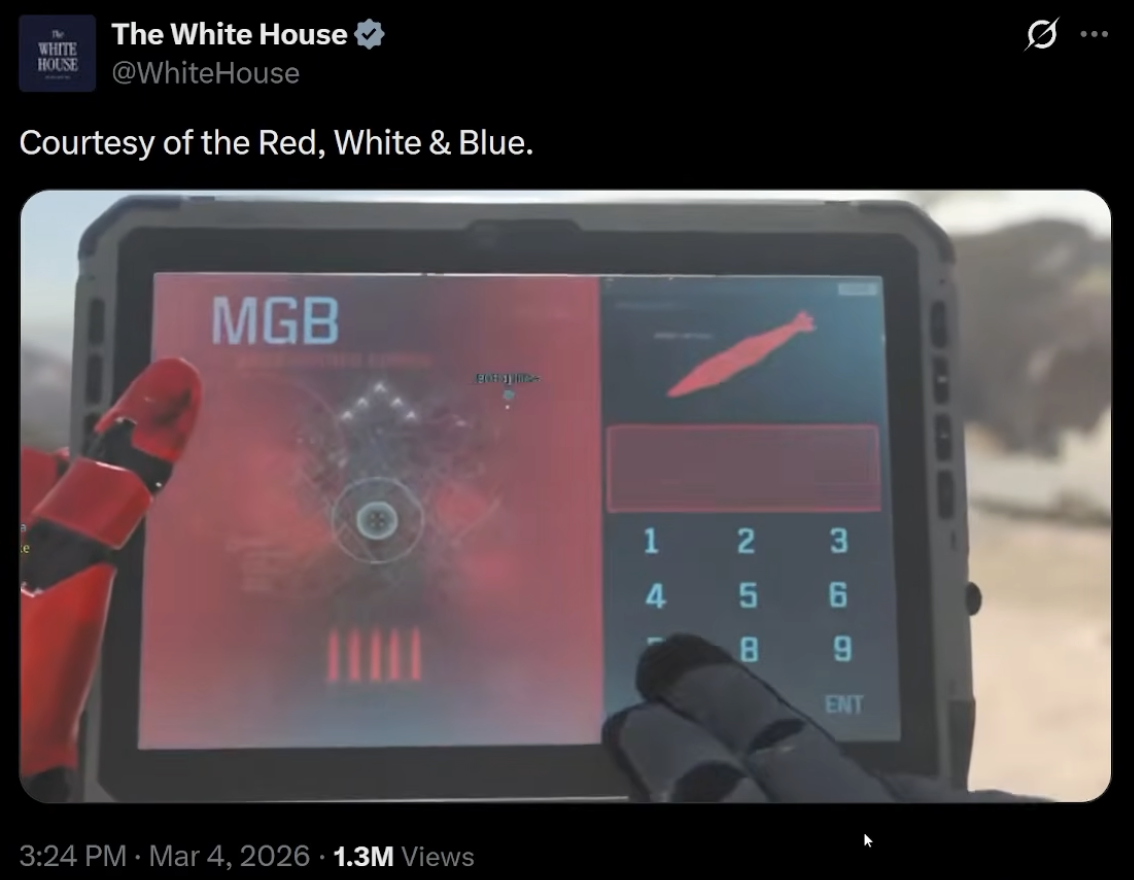

Over the past few weeks, the Trump White House has been publishing some takes. From the racist video about the Obamas, to pushing a hockey feud with Canada using AI-generated images, to trying to pump up its Iran war by mixing video game graphics with real combat footage, the administration is putting on a master class in sowing discord.

They aren't doing it to get onto the news. None of these spots were made for journalists, except to get them to report on the very existence of the videos. All of them were made for social media feeds, where the average piece of content gets about 1.7 seconds of attention. That isn't enough time to inform, but it is enough to provoke. It's enough time to make you feel something before you can think about whether it's real.

And here's the truth: we started scrolling like that because the platforms were designed for it.

Slowly at first, then all at once

There's a useful distinction that tends to be forgotten now: social networking and social media aren't the same thing. The shift from one to the other is where most of our current problems begin.

Social networking was active. You shared things with people you knew. They shared something, maybe you cared, maybe you didn’t. No matter what, it was communal, conversational.

Social media is passive. You scroll. An algorithm decides what you see, based not on who you know or what you asked for, but on what will keep you there the longest.

That shift didn't happen because users asked for it. It happened because the platforms' business model is time: your time, specifically, and how much of it they can capture.

When your revenue comes from advertising, three things matter above all else: how many users you have, how long they stay, and how much data you can collect to target them. User happiness doesn't appear anywhere in that equation. Neither does truth.

They've always known

In 2017, Facebook made a quiet change to its algorithm. Emoji reactions — love, wow, sad, angry — were given five times the weight of a regular like. The reasoning was straightforward: strong emotional reactions meant more engagement, and more engagement meant more time on the platform.
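To make that weighting concrete, here is a minimal sketch of what "five times the weight of a like" does to a ranking. Everything in it is an assumption for illustration: the `Post` class, the `engagement_score` and `rank` functions, and the two sample posts are hypothetical, and a real feed-ranking system uses far more signals than this. Only the 5-to-1 ratio comes from the reporting described above.

```python
from dataclasses import dataclass

# Illustrative weights only. The 5:1 ratio is the one described in the text;
# nothing else here reflects how any real platform is implemented.
LIKE_WEIGHT = 1
REACTION_WEIGHT = 5


@dataclass
class Post:
    title: str
    likes: int
    reactions: int  # emoji reactions (love, wow, sad, angry) lumped together


def engagement_score(post: Post, reaction_weight: int = REACTION_WEIGHT) -> int:
    """Hypothetical score: likes count once, reactions count `reaction_weight` times."""
    return post.likes * LIKE_WEIGHT + post.reactions * reaction_weight


def rank(posts: list[Post], reaction_weight: int = REACTION_WEIGHT) -> list[Post]:
    """Sort posts so the highest-scoring one comes first."""
    return sorted(
        posts,
        key=lambda p: engagement_score(p, reaction_weight),
        reverse=True,
    )


# Two made-up posts: one widely liked, one that mostly provokes reactions.
calm = Post("Local library extends opening hours", likes=100, reactions=5)
furious = Post("You won't BELIEVE what they did", likes=20, reactions=40)

# With reactions weighted 5x, the provocative post wins despite far fewer likes.
print([p.title for p in rank([calm, furious])])

# With reactions weighted the same as likes, the order flips.
print([p.title for p in rank([calm, furious], reaction_weight=1)])
```

In this toy example the post with a fifth of the likes still lands on top, purely because of how reactions are counted. The people reacting didn't change; the arithmetic did.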

Their own researchers flagged the problem almost immediately. By 2019, Facebook's internal data scientists had confirmed it in writing: posts that received angry reactions were disproportionately likely to contain misinformation, toxicity, and low-quality content. Their own platform was systematically amplifying its worst content, and they knew it.

They kept it running anyway.

When the Facebook Files leaked in 2021, Meta made adjustments. Turned down the weighting. Issued statements about integrity teams. But their own data, when they finally zeroed out the angry emoji entirely, told the real story: misinformation dropped, harmful content dropped — and oh yeah, overall user engagement was completely unaffected.

And now they've stopped pretending

Fast forward to January 2025. Meta announces it's ending its independent fact-checking program — the one it had maintained for nearly a decade. Over 70 fact-checking organisations sign an open letter condemning the decision. Meta's own public acknowledgement of the consequences? That harmful content will inevitably increase.

They said that. Knowing it. And did it anyway.

(Fun fact: Sunslider was born in January 2025, in no small part due to this and other similar positions that were being taken by Meta and Mark Zuckerberg in the wake of Trump’s re-election, such as Zuckerberg’s statement that companies need more “masculine energy”. Pardon me a moment, I need to go chase my eyes down this hill, they seem to have rolled right out of my head.)

What did they replace those fact checkers with? Community notes: users collectively flagging misinformation. It sounds democratic. It doesn't work. Twitter already showed why: a study of one million community notes from the 2024 US election found that 74% of accurate corrections were never seen by users. We're back to those 1.7 seconds: social media is built to amplify emotional content and bury the corrections.

So their fix for their algorithms is defeated by their algorithms. Funny how that works.

The real insult

Meta generated $160 billion in advertising revenue in 2024. Not bad, but in 2025, they generated $195 billion! That's roughly $355 billion in two years, thanks to a business predicated on keeping you on the platform as long as possible, feeling as much as possible, clicking as much as possible. Emotional content, whether true or invented, serves their goal better than calm content.

But is being glued to your phone doomscrolling your goal?

Before you answer, let’s think about what’s currently being introduced into this environment: the AI content flood. What was becoming visible six months ago is here in force: generated images, fabricated quotes, synthetic video, entire accounts that exist purely to provoke a reaction and sell you something on the back of it — a political view, a pair of jeans, a version of reality. The incentive structure that was already pushing toward outrage and misinformation now has an industrial-scale content machine behind it.

And again, the response from those giants is to make it your problem.

You — unpaid, untrained, scrolling through hundreds of posts a day — are now apparently responsible for fact-checking the feed that trillion-dollar companies are creating for you. I don't know about you, but I don't have the time or inclination to parse every piece of content I see to figure out whether it's real. That's not a reasonable thing to ask of anyone.

But it turns out that asking unreasonable things of users, while the advertising revenue keeps growing, is entirely consistent with how these platforms have always operated.

Circling back to those White House videos.

They work. Not as journalism, not as reality, but as social media content they work perfectly, and on all sides of the political spectrum! They make people feel something before they have time to think. They get shared before they get checked. They live in a feed that was specifically engineered to let exactly this kind of content thrive.

None of this is going to change until the business model changes. Platforms optimizing for engagement will always find that anger, outrage and fear outperform accuracy. That's not a bug. It's the inevitable output of the system.

At Sunslider, we started from a different place. No ads means no incentive to maximize your time in the app. No algorithmic feed means no system learning to push your emotional buttons. It doesn't solve the broader problem — we're not naive about that. But it's at least an honest attempt to build something that isn't working against you.